Matilde Alves | 17 April 2023

AI is rapidly being adopted in critical decision-making processes. At the same time, stakeholders differ in their familiarity with AI and in the information they need. This makes explaining model outcomes clearly and understandably both very important and difficult.

In our white paper, we explore conversational explainable AI, how we came to develop an interactive chatbot for explaining AI predictions, and how this helps make AI more responsible.

AI is being applied to a growing range of critical decision-making processes across several domains. However, this use comes with challenges. Besides privacy and security concerns, stakeholders are sometimes apprehensive about how much they can trust model decisions.

This has led to the development of multiple explainability techniques that translate machine learning model logic into something stakeholders can understand and evaluate. Still, stakeholders come from diverse backgrounds and may struggle to make use of these explanations. In fact, it is hard to create a single explanation that fits the needs and requirements of every stakeholder.

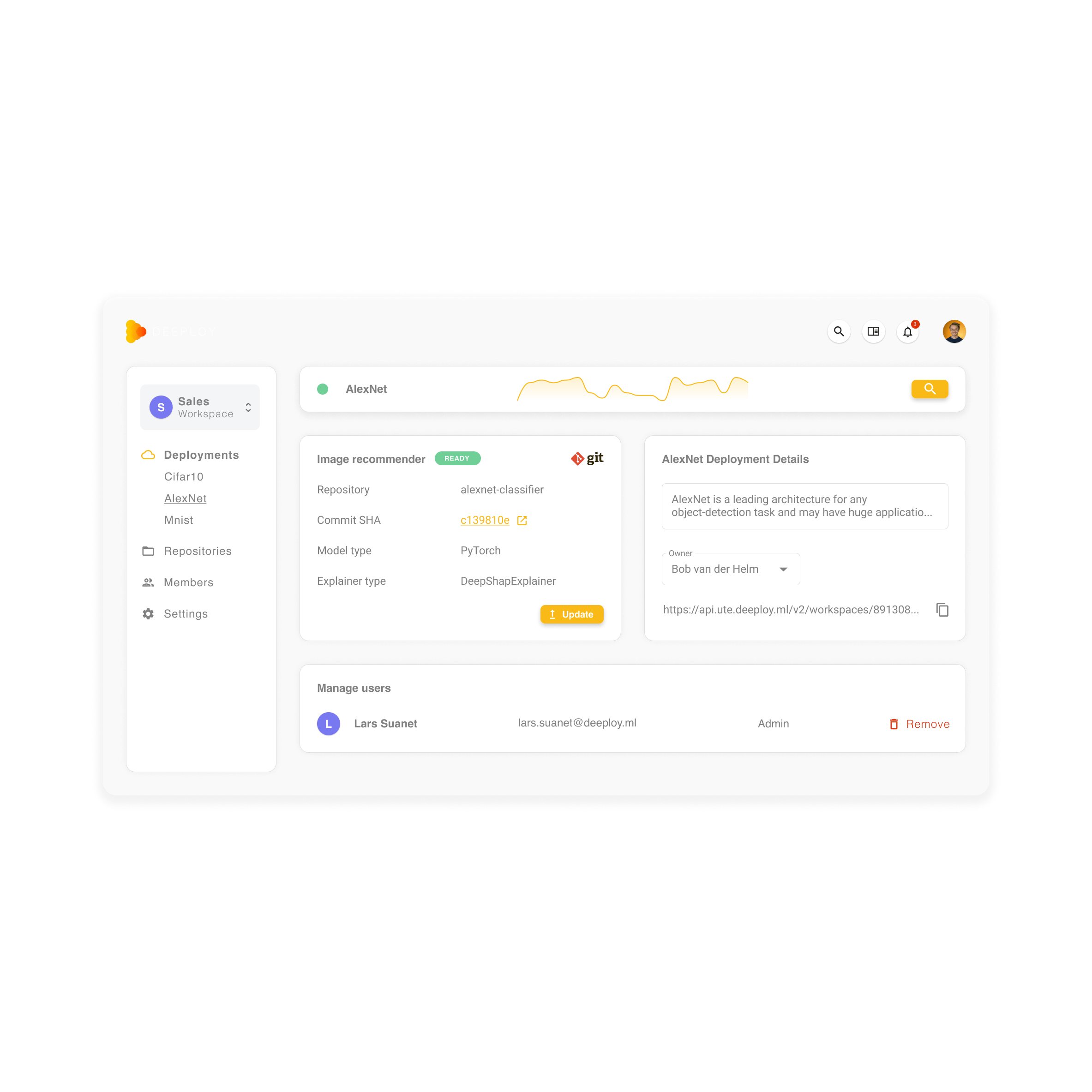

Conversational XAI (conversational explainable AI) is one possible answer to this, as it brings an interactive, human-like conversational system to explainable AI. In collaboration with Tim Kleinloog (Deeploy), Nilay Aishwarya, and Ujwal Gadiraju (TU Delft), we developed a pioneering conversational XAI chatbot that addresses these concerns by letting stakeholders obtain and interact with diverse explanations through a human-like dialogue system.

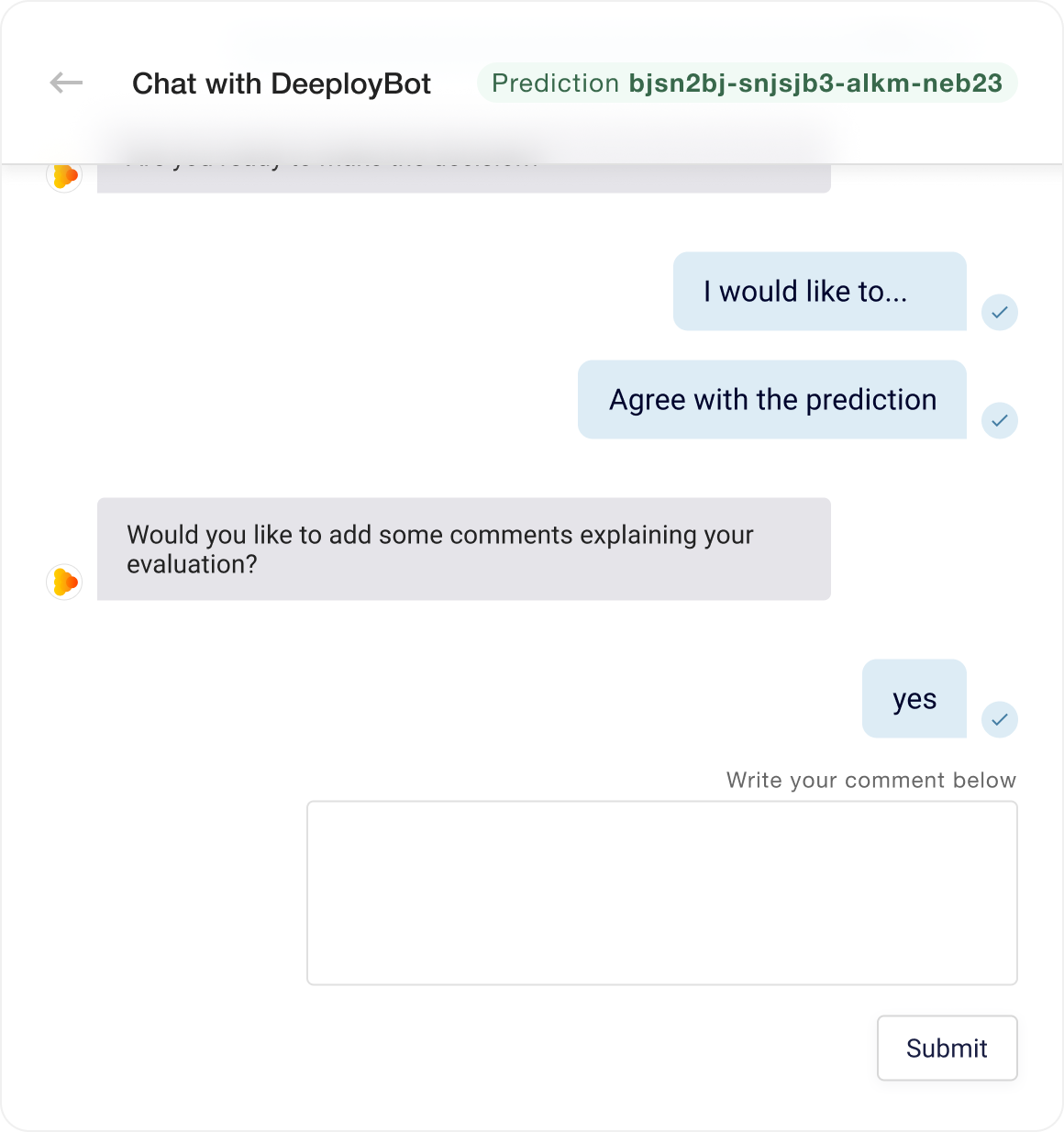

Our chatbot allows stakeholders to interact with an AI model through a natural-language interface. Once a prediction has been made, users can ask pre-defined questions about it, such as “What would happen if you give the model different input?”.

The chatbot produces natural language responses to these questions. In addition, it encourages stakeholders to explore different explanation approaches, so that they see the prediction from several angles and understand it better.
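To make the question-and-answer flow described above more concrete, here is a minimal sketch of how pre-defined questions could be mapped to explanation functions that reply in natural language. It is an illustration only, not the implementation from the white paper: the toy linear model, its weights and threshold, the feature names, the second question, and the function names `predict`, `explain_why`, `explain_what_if`, and `answer` are all assumptions made for this example.

```python
from typing import Callable, Dict, Optional

Features = Dict[str, float]

# Hypothetical toy model: a linear score with made-up weights and threshold.
WEIGHTS: Features = {"income": 0.5, "debt": -1.0}
THRESHOLD = 10.0


def predict(features: Features) -> str:
    """Return 'approved' when the weighted score reaches the threshold."""
    score = sum(WEIGHTS[name] * value for name, value in features.items())
    return "approved" if score >= THRESHOLD else "rejected"


def explain_why(features: Features, changes: Optional[Features] = None) -> str:
    """Name the feature whose weighted contribution is largest in magnitude."""
    contributions = {name: WEIGHTS[name] * value for name, value in features.items()}
    top = max(contributions, key=lambda name: abs(contributions[name]))
    return f"The feature with the largest influence was '{top}' ({contributions[top]:+.1f})."


def explain_what_if(features: Features, changes: Optional[Features] = None) -> str:
    """Re-run the model with some inputs replaced and describe the effect."""
    if not changes:
        return "Please tell me which input you would like to change."
    before = predict(features)
    after = predict({**features, **changes})
    described = ", ".join(f"{name} = {value}" for name, value in changes.items())
    if before == after:
        return f"With {described}, the prediction would stay '{before}'."
    return f"With {described}, the prediction would change from '{before}' to '{after}'."


# Pre-defined questions, each mapped to the explanation that answers it.
QUESTIONS: Dict[str, Callable[[Features, Optional[Features]], str]] = {
    "Why did the model make this prediction?": explain_why,
    "What would happen if you give the model different input?": explain_what_if,
}


def answer(question: str, features: Features, changes: Optional[Features] = None) -> str:
    """Route a pre-defined question to its explanation, or list the options."""
    handler = QUESTIONS.get(question)
    if handler is None:
        return "I can only answer these questions: " + "; ".join(QUESTIONS)
    return handler(features, changes)


applicant = {"income": 40.0, "debt": 12.0}
print(answer("What would happen if you give the model different input?",
             applicant, {"debt": 8.0}))
```

Keeping the questions in a single lookup table means a new explanation approach can be offered by adding one entry, and an unrecognized question simply returns the list of available ones, which nudges users toward the other angles on the same prediction.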

Once a user has a sufficient understanding of how the model came to a prediction, they can agree or disagree with the prediction directly through the chatbot. Users can also describe their evaluation of the decision and highlight the key features that helped them reach their verdict. The chatbot gathers and saves these insights, helping ML engineers improve their models and explanations.
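The feedback step described above can be pictured as a small record per evaluated prediction. The sketch below is a hypothetical illustration, not the chatbot's actual storage format: the `Feedback` fields, the `FeedbackLog` class, and the example values are assumptions chosen to mirror the three things a user provides (a verdict, a written evaluation, and the key features).

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List


@dataclass
class Feedback:
    """One user's evaluation of a single prediction (hypothetical schema)."""
    prediction_id: str
    agrees: bool                      # did the user agree with the prediction?
    comment: str = ""                 # free-text evaluation of the decision
    key_features: List[str] = field(default_factory=list)  # features behind the verdict


class FeedbackLog:
    """In-memory collection of feedback that ML engineers could review later."""

    def __init__(self) -> None:
        self._records: List[Feedback] = []

    def add(self, feedback: Feedback) -> None:
        self._records.append(feedback)

    def disagreement_rate(self) -> float:
        """Share of recorded evaluations in which the user disagreed."""
        if not self._records:
            return 0.0
        disagreements = sum(1 for record in self._records if not record.agrees)
        return disagreements / len(self._records)

    def to_json(self) -> str:
        """Serialize all records so they can be saved or passed on."""
        return json.dumps([asdict(record) for record in self._records], indent=2)


log = FeedbackLog()
log.add(Feedback("pred-001", agrees=True, key_features=["income"]))
log.add(Feedback("pred-002", agrees=False,
                 comment="Debt looks outdated for this applicant.",
                 key_features=["debt"]))
print(log.to_json())
```

Storing the highlighted features alongside the verdict lets engineers see not only how often users disagree, but also which inputs those disagreements tend to involve.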

Explainability

Personalized explanations tailored to specific needs and displaying only the information you really need

Human Dialogue

Interact through human dialogue that feels natural, even to those without a technical background

Complete Oversight

Practice effective human oversight by allowing stakeholders to make well-informed evaluations

Responsible AI is the practice of developing and operating AI systems that are human-centered, fair, inclusive, and respectful of human rights and democracy while aiming at contributing positively to the public good.

A responsible approach to AI lets organizations manage AI-related risks and increase stakeholder trust in the implementation of these systems. It also helps organizations stay on top of the latest regulatory developments, such as the forthcoming EU AI Act.

Conversational XAI fits into the pillars of responsible AI by helping ensure human oversight. With conversational XAI, transparency and explainability, as well as the integration of feedback into AI models, become more straightforward for the relevant stakeholders. This stimulates collaboration and, in turn, improves both the models themselves and how responsible and fair they are.